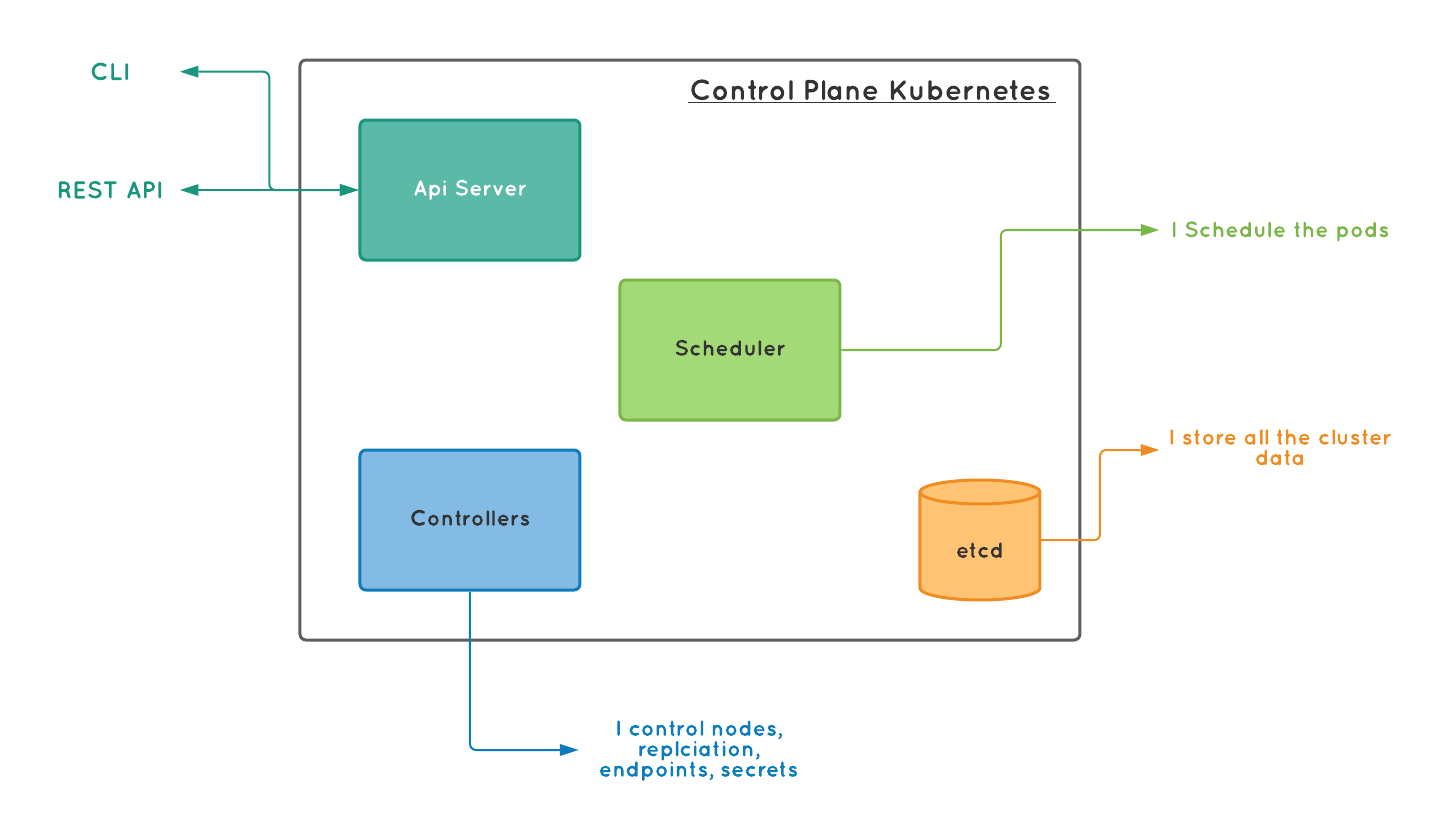

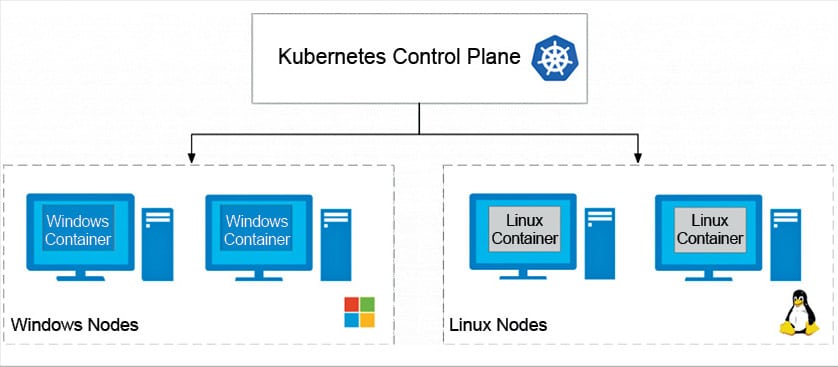

Qovery runs on top of Kubernetes, and on top of your cloud account. Qovery is particularly useful for companies that want to leverage Kubernetes for their applications but don't have the expertise or resources to manage the infrastructure. Qovery enables companies to deploy their applications on Kubernetes with minimal effort, reducing the time and costs associated with infrastructure management. Whether you're a junior, experienced, or senior developer, Qovery makes deploying your applications to the cloud using Kubernetes a breeze.

Qovery abstracts away the complexity of Kubernetes, allowing developers to focus on writing code and delivering value to their customers. With Qovery, you can confidently deploy your applications, knowing that the platform takes care of the underlying infrastructure and provides the tools you need to manage your deployment. Qovery also allows access to your infrastructure and Kubernetes cluster if needed, giving you full control and flexibility over your deployment.

Qovery uses a unique architecture to manage thousands of Kubernetes clusters with a single control plane. The control plane is a core component of Kubernetes that manages the state of the cluster and communicates with the API server to perform operations such as deploying and scaling applications. In Qovery's case, the control plane is written in Rust and Kotlin and handles API requests from Git providers and from the web and CLI interfaces. The control plane then interprets, transforms, and forwards the requests to the appropriate Kubernetes cluster.

Most of the Qovery Control Plane operations are handled by what we call the Core, written in Kotlin (JVM based); I will explain why we decided to use it in a future article. Here is the Core load average over the last 30 days. Each colored slice is a new release of the Core. This means that Qovery can handle large-scale deployments with ease. The Core runs on an instance in the AWS us-east-2 region with 4 GB of RAM and 4 vCPUs. This instance uses the v17 LTS version of the JVM (Java Virtual Machine) and Kotlin 1.8.

Qovery Control Plane Availability

Availability is one of the most important aspects of any cloud infrastructure, and Qovery is no exception. While Qovery's control plane is essential for managing Kubernetes clusters, it's important to note that the infrastructure still runs even if the control plane is unavailable.

When I have a Kubernetes cluster with multiple control nodes and delete one of these, the whole API server does not seem to be available anymore. In this setup I want to scale down from two control nodes to one, but end up rendering the cluster unusable:

    $ kubectl get nodes
    $ kubectl drain master2 --ignore-daemonsets
    WARNING: ignoring DaemonSet-managed Pods: kube-system/calico-node-hns7p, kube-system/kube-proxy-vk6t7
    master2 Ready,SchedulingDisabled master 19h v1.18.6
    FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'
    W0811 10:24:49.750898 7159 reset.go:99] Unable to fetch the kubeadm-config ConfigMap from cluster: failed to get node registration: failed to get corresponding node: nodes "master2" not found
    WARNING: Changes made to this host by 'kubeadm init' or 'kubeadm join' will be reverted.
    W0811 10:24:51.487912 7159 removeetcdmember.go:79] No kubeadm config, using etcd pod spec to get data directory
    Unmounting mounted directories in "/var/lib/kubelet"
    Deleting contents of config directories:
    Deleting contents of stateful directories:
    The reset process does not clean CNI configuration.
    The reset process does not reset or clean up iptables rules or IPVS tables.
    If you wish to reset iptables, you must do so manually by using the "iptables" command.
    If your cluster was setup to utilize IPVS, run ipvsadm --clear (or similar)
    The reset process does not clean your kubeconfig files and you must remove them manually.
    Please, check the contents of the $HOME/.kube/config file.
    Error from server: etcdserver: request timed out
    To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.
    The connection to the server master1:6443 was refused - did you specify the right host or port?

What's missing here? Or how else is removing a control plane node different from removing a worker node? Pointers are appreciated.
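One possible reading of the `etcdserver: request timed out` error, which the post itself does not confirm: if the cluster runs etcd stacked on the control plane nodes (an assumption), and master2 was still registered as an etcd member when its data was wiped, the remaining member can no longer reach a majority. etcd needs a majority of its registered members to serve requests, so a two-member cluster tolerates no failures. The sketch below only illustrates that arithmetic. The function names are hypothetical and are not part of etcd or kubeadm.

```python
def quorum(members: int) -> int:
    """Smallest majority of a cluster with `members` registered voting members."""
    if members < 1:
        raise ValueError("a cluster needs at least one member")
    return members // 2 + 1


def has_quorum(registered: int, healthy: int) -> bool:
    """True if the healthy members still form a majority of the registered ones."""
    return healthy >= quorum(registered)


def failure_tolerance(members: int) -> int:
    """How many members can fail before the cluster loses its majority."""
    return members - quorum(members)


if __name__ == "__main__":
    # Assumed scenario: master2 is gone but still registered as a member.
    print("two registered, one healthy:", has_quorum(registered=2, healthy=1))
    # Assumed scenario: the member was removed from the membership list first.
    print("one registered, one healthy:", has_quorum(registered=1, healthy=1))
    for n in (1, 2, 3, 5):
        print(n, "members: quorum", quorum(n), "tolerates", failure_tolerance(n))
```

Under these assumptions, the difference from removing a worker node is that a control plane node also carries cluster membership state, so the membership list has to shrink before the node's data disappears.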
